It has been a while since Plat_Forms 2012, and today we want to share with you what we have been doing in the meantime.

We have collected an enormous amount of data (interviews during the contest, code, version archives, running solutions, questionnaires, and more) and have not finished our evaluation yet. In particular, we so far cannot draw any platform-specific conclusions. As you might remember, Plat_Forms 2012 set out to find a new notion of "platform" that is not tied to a particular programming language but is based on characteristics of the technology stack. This has turned out to be a difficult task, and we are still working on it.

Over the next three weeks we will therefore simply show a few per-team charts without comment.

What we do have is data on the type of activity the teams were performing at the moment of our interviews (conducted every 15 minutes), data on the completeness of the teams' solutions, and data on the robustness of the solutions.

What is still missing, and currently being worked on among other things, is data on the maintainability of the solutions, data on their static structure (several notation-specific scanners still need to be written), and, of course, the attempt to explain the observations in terms of the technology employed. So stay tuned for updates.

Robustness

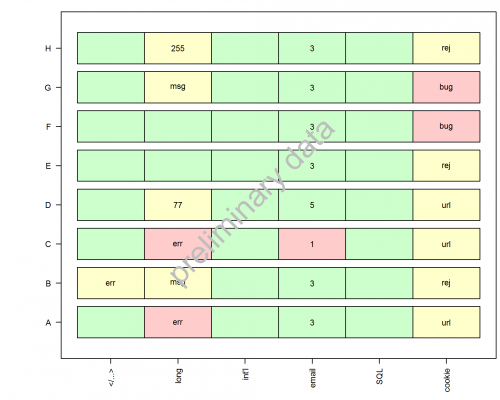

For our robustness tests we stressed the solutions with a variety of unusual inputs, some valid and some invalid. Figure 1 shows our observations.

Figure 1: Results of our robustness tests. Light red stands for broken, yellow for acceptable, and green for OK. We submitted input with HTML tags ("</...>" column), veeeeeery long character strings ("long" column), Unicode characters ("int'l" column), 5 different types of invalid email addresses ("email" column), and strings containing SQL quote characters ("SQL" column), and we disabled cookies before trying to log in ("cookie" column).